- cross-posted to:

- [email protected]

- cross-posted to:

- [email protected]

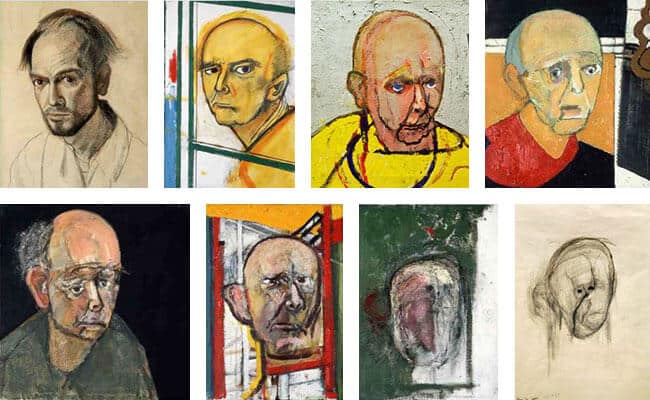

It’s like that painter who kept doing self-portraits through alzheimers.

we have to be very careful about what ends up in our training data

Don’t worry, the big tech companies took a snapshot of the internet before it was poisoned so they can easily profit from LLMs without allowing competitors into the market. That’s who “We” is right?

It’s impossible for any of them to have taken a sufficient snapshot. A snapshot of all unique data on the clearnet would have probably been in the scale of hundreds to thousands of exabytes, which is (apparently) more storage than any single cloud provider.

That’s forgetting the prohibitively expensive cost to process all that data for any single model.

The reality is that, like what we’ve done to the natural world, they’re polluting and corrupting the internet without taking a sufficient snapshot — just like the natural world, everything that’s lost is lost FOREVER… all in the name of short term profit!

The retroactive enclosure of the digital commons.

GOOD.

This “informational incest” is present in many aspects of society and needs to be stopped (one of the worst places is in the Intelligence sector).

Informational Incest is my least favorite IT company.

WHAT ARE YOU DOING STEP SYS ADMIN?

Too bad they only operate in Alabama

Damn. I just bought 200 shares of ININ.

they’ll be acquired by McKinsey soon enough

A few years ago, people assumed that these AIs will continue to get better every year. Seems that we are already hitting some limits, and improving the models keeps getting harder and harder. It’s like the linewidth limits we have with CPU design.

I think that hypothesis still holds as it has always assumed training data of sufficient quality. This study is more saying that the places where we’ve traditionally harvested training data from are beginning to be polluted by low-quality training data

It’s almost like we need some kind of flag on AI-generated content to prevent it from ruining things.

If that gets implemented, it would help AI devs and common people hanging online.

File it under “too good to happen”. Most writing jobs are proofreading AI-generated shit these days. We’ll need to wait until there’s real money in writing scripts to de-pollute content.

No they are increasingly getting better, mostly they fit in a bigger context of other discoveries

no, not really. the improvement gets less noticeable as it approaches the limit, but I’d say the speed at which it improves is still the same. especially smaller models and context window size. there’s now models comparable to chatgpt or maybe even gpt 4.0 (I don’t remember, one or the other) with context window size of 128k tokens, that you can run on a GPU with 16gb of vram. 128k tokens is around 90k words I think. that’s more than 4 bee movie scripts. it can “comprehend” all of that at once.

AI like:

that shit will pave the way for new age horror movies i swear

From the article:

To demonstrate model collapse, the researchers took a pre-trained LLM and fine-tuned it by training it using a data set based on Wikipedia entries. They then asked the resulting model to generate its own Wikipedia-style articles. To train the next generation of the model, they started with the same pre-trained LLM, but fine-tuned it on the articles created by its predecessor. They judged the performance of each model by giving it an opening paragraph and asking it to predict the next few sentences, then comparing the output to that of the model trained on real data. The team expected to see errors crop up, says Shumaylov, but were surprised to see “things go wrong very quickly”, he says.

How many times did you say this went through a copy machine?

I wonder if the speed at which it degrades can be used to detect AI-generated content.

I wouldn’t be surprised if someone is working on that as a PhD thesis right now.

how are you going to write a thesis on writing a FLAC to disc and ripping it over and over?

By measuring how it does with real images vs generated ones to start. The goal would be to show a method to reliably detect ai images. Gotta prove that it works.

How would it detect, you would need the model and if you do you can already detect

It’s an issue with the machine learning technique, not the specific model. The hypothetical thesis would be how to use this knowledge in general.

Why are you so agitated by my off hand comment?

Am I agitated? 😂 💜 You it’s not with all models no

literally just the difference between flac and mp3 as it were digital conversion noise with a little bot behind it

Huh. Who would have thought talking mostly or only to yourself would drive you mad?

The Habsburg Singularity

I only have a limited and basic understanding of Machine Learning, but doesn’t training models basically work like: “you, machine, spit out several versions of stuff and I, programmer, give you a way of evaluating how ‘good’ they are, so over time you ‘learn’ to generate better stuff”? Theoretically giving a newer model the output of a previous one should improve on the result, if the new model has a way of evaluating “improved”.

If I feed a ML model with pictures of eldritch beings and tell them that “this is what a human face looks like” I don’t think it’s surprising that quality deteriorates. What am I missing?

In this case, the models are given part of the text from the training data and asked to predict the next word. This appears to work decently well on the pre-2023 internet as it brought us ChatGPT and friends.

This paper is claiming that when you train LLMs on output from other LLMs, it produces garbage. The problem is that the evaluation of the quality of the guess is based on the training data, not some external, intelligent judge.

ah I get what you’re saying., thanks! “Good” means that what the machine outputs should be statistically similar (based on comparing billions of parameters) to the provided training data, so if the training data gradually gains more examples of e.g. noses being attached to the wrong side of the head, the model also grows more likely to generate similar output.

Part of the problem is that we have relatively little insight into or control over what the machine has actually “learned”. Once it has learned itself into a dead end with bad data, you can’t correct it, only work around it. Your only real shot at a better model is to start over.

When the first models were created, we had a whole internet of “pure” training data made by humans and developers could basically blindly firehose all that content into a model. Additional tuning could be done by seeing what responses humans tended to reject or accept, and what language they used to refine their results. The latter still works, and better heuristics (the criteria that grades the quality of AI output) can be developed, but with how much AI content is out there, they will never have a better training set than what they started with. The whole of the internet now contains the result of every dead end AI has worked itself into with no way to determine what is AI generated on a large scale.

It takes a massive number of intelligent humans that expect to be paid fairly to train the models. Most companies jumping on the AI bandwagon are doing it for quick profits and are dropping the ball on that part.

deleted by creator

And screenshotting a jpeg over and over again reduces the quality?

interesting refinement of the old GIGO effect.

Deadpool spoilers

I find it surprising that anyone is surprised by it. This was my initial reaction when I learned about it.

I thought that since they know the subject better than myself they must have figured this one out, and I simply don’t understand it, but if you have a model that can create something, because it needs to be trained, you can’t just use itself to train it. It is similar to not being able to generate truly random numbers algorithmically without some external input.

Sounds reasonable, but a lot of recent advances come from being able to let the machine train against itself, or a twin / opponent without human involvement.

As an example of just running the thing itself, consider a neural network given the objective of re-creating its input with a narrow layer in the middle. This forces a narrower description (eg age/sex/race/facing left or right/whatever) of the feature space.

Another is GAN, where you run fake vs spot-the-fake until it gets good.